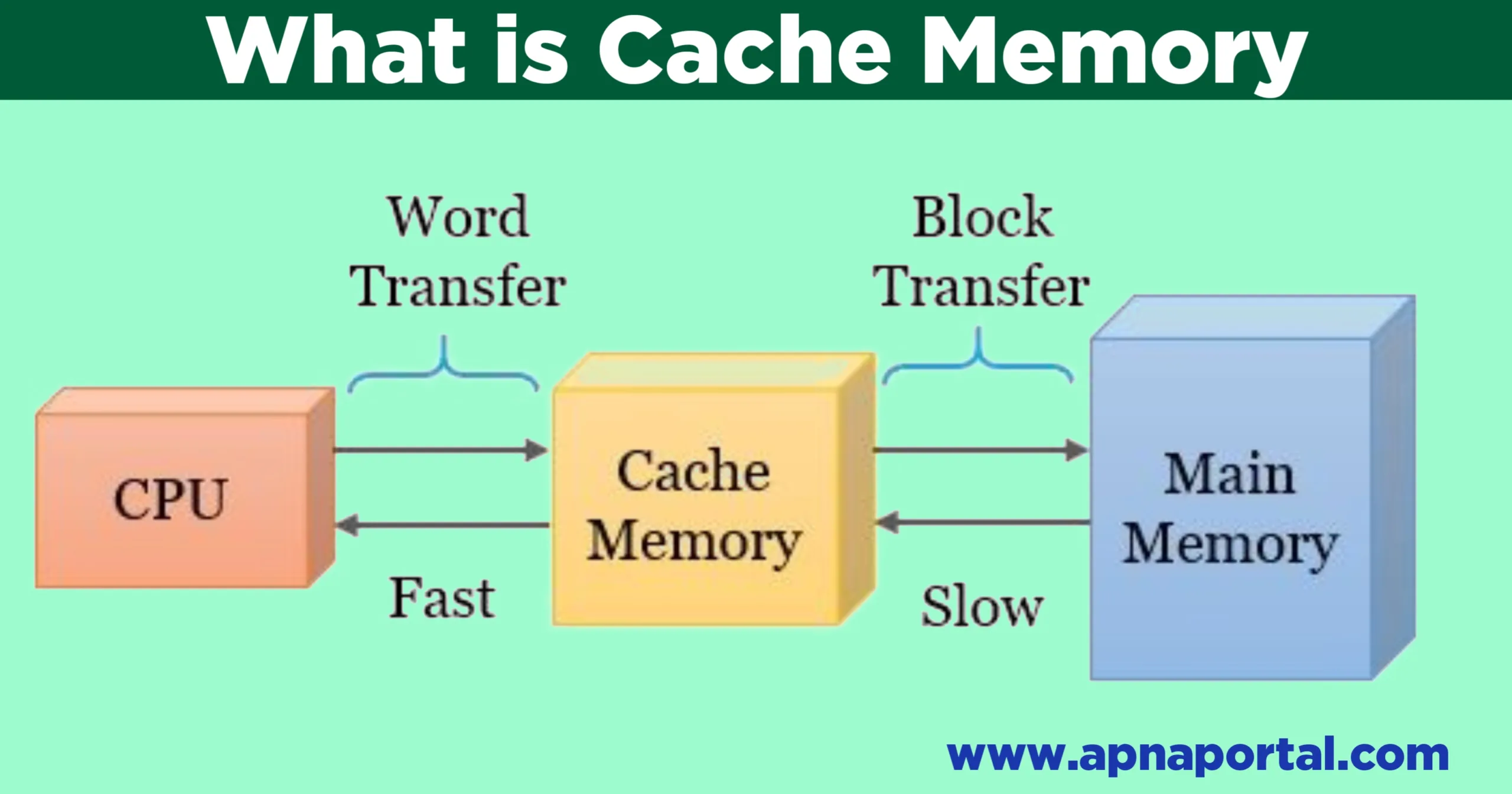

What Is Cache Memory? Definition and Types of Cache Memory

In this post, we learn what cache memory is and what types of cache memory exist.

What is cache memory?

Cache memory is a small, fast memory built from static RAM (SRAM) that the processor can access much more quickly than main memory. It stores copies of the data that the processor uses most frequently. Thus, cache memory is the first place the processor …
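To make "the first place the processor looks" concrete, here is a minimal sketch of a toy cache. It assumes a direct-mapped organization, where each memory block can live in exactly one cache line. This is only one common design, and the post does not say which one it has in mind. The class name `DirectMappedCache` and the sizes used are hypothetical and chosen for illustration only. On every access, the cache is checked first. A hit is served from the cache. A miss "fetches" the block from main memory into the cache.

```python
class DirectMappedCache:
    """Hypothetical toy direct-mapped cache: each memory block maps to one cache line."""

    def __init__(self, num_lines: int, block_size: int) -> None:
        self.num_lines = num_lines
        self.block_size = block_size
        # Tag of the block currently held in each line; None means the line is empty.
        self.tags = [None] * num_lines
        self.hits = 0
        self.misses = 0

    def access(self, address: int) -> bool:
        """Return True on a cache hit, False on a miss (the block is then loaded)."""
        block = address // self.block_size  # memory block that contains this byte
        index = block % self.num_lines      # the only cache line this block may use
        tag = block // self.num_lines       # tells apart blocks sharing that line
        if self.tags[index] == tag:
            self.hits += 1                  # found in cache: fast path
            return True
        # Miss: bring the block in from (slower) main memory, evicting the old one.
        self.tags[index] = tag
        self.misses += 1
        return False


cache = DirectMappedCache(num_lines=4, block_size=16)
results = [cache.access(addr) for addr in [0, 4, 64, 0, 8]]
print(results)                   # [False, True, False, False, True]
print(cache.hits, cache.misses)  # 2 3
```

Address 4 hits because it lies in the same 16-byte block as address 0, which was just loaded. Address 64 maps to the same cache line as address 0, so it evicts that block. The next access to address 0 therefore misses again. This kind of conflict is a known weakness of direct mapping in this simplified model.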